Against AI but Not Against Tech? Pick a Side or Send Your Comments by Pigeon.

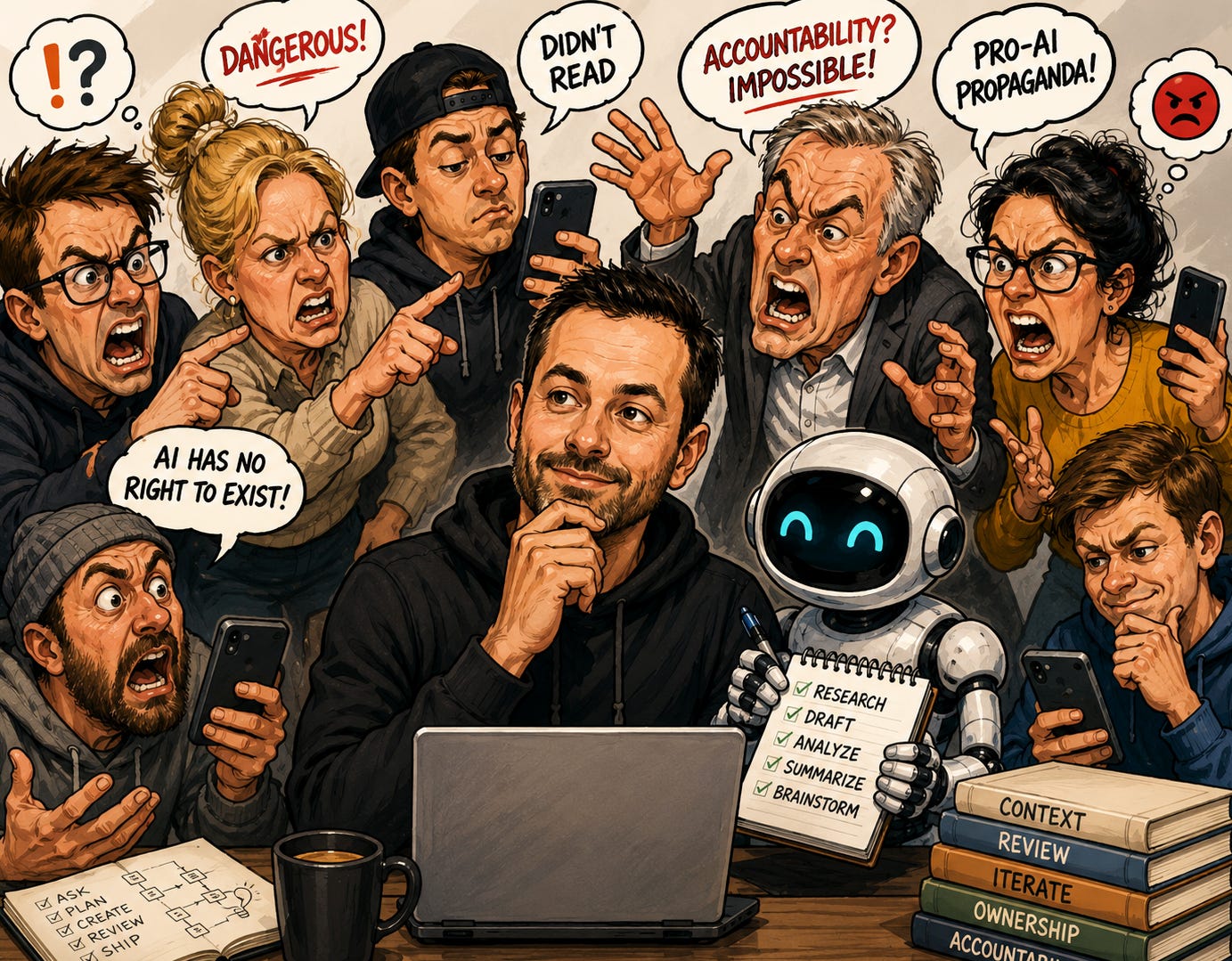

Last week I wrote that AI makes mistakes, and so does every employee you have ever hired. The post triggered exactly the reaction I expected.

Some people agreed. Some got uncomfortable. And a few revealed the real problem without realizing it.

Most of them did not even read the article.

I do not understand everything either. I never claimed to. That is exactly why I open these conversations and share my opinions, not untouchable truths. But there is a difference between having an opinion and making confident statements about something you have not even read.

People Love to Judge What They Do Not Understand

The responses followed a pattern I have seen for years. Confident opinions from people who did not do the reading.

One person skimmed the article, admitted they skimmed it, and then wrote a firm opinion about what it said. Another declared that AI “has no right to exist.” A third called the piece “dangerous” without addressing a single specific point.

Most people read the title. Some read the subtitle. A few made it through the first paragraph. Then they scrolled to the comments and started arguing. And some just needed to drop a “smart” comment to feed the LinkedIn algorithm. The content does not matter. The engagement does.

The article was clearly about accountability staying with the person using the tool. It also included a section about building a second brain to give AI real context instead of letting it guess. You could call that section an ad for a second brain if you want. Fair enough. Most people never got to it. They reacted to the headline and filled in the rest with whatever they already believed.

This is how most public discussions work. People do not engage with the argument. They react to the feeling the argument gives them. Then they construct a rebuttal for something that was never said.

The article said accountability stays with the person. Multiple people responded as if it said the opposite. They were not arguing with me. They were arguing with a version of the article they invented in their head.

This happens constantly. People form strong opinions based on partial information, then defend those opinions as if they did the work. They do not know what they do not know. But they are certain about it.

Nobody Was Holding Humans Accountable Either

The idea that human work existed inside some clean accountability system is fiction. Of course, in some cases people do get held accountable. But that is not what this is about.

I have been in business for many years. I have managed teams across development, operations, sales, production, distribution, and finance. Accountability was always the weakest link. Not because people are bad. Because accountability is hard.

A developer ships a bug to production. Who is accountable? The developer who wrote it? The reviewer who missed it? The manager who skipped QA? The PM who compressed the timeline?

In most organizations, the answer is nobody. The bug gets fixed. A retro happens. Everyone nods. The same thing happens again three months later.

So when someone says “humans can be held accountable,” what they actually mean is humans can be blamed. Blame and accountability are not the same thing. Blame finds a target. Accountability changes a system.

The person who is actually accountable is always the same. The owner. The manager. The founder. The one who hired, delegated, and signed off. That was true before AI. It is true now.

AI Made Accountability Visible

The accountability gap that always existed is now impossible to ignore.

When a human employee made a mistake, it was buried inside meetings, email threads, and internal processes. Nobody posted it on LinkedIn. Nobody took a screenshot. It was normalized as “part of working with people.”

When AI makes a mistake, it is public. It is screenshotted. It is shared with commentary about how dangerous this all is. The same mistake that would have been quietly fixed inside a team now becomes evidence in a cultural argument.

AI did not create an accountability problem. It exposed the one that was already there.

Certainty Without Experience

The loudest critics of AI accountability were not operations leaders. Not founders. Not people who manage systems and deal with output quality every day.

When you have done that for years, mistakes do not shock you. They feel familiar. You already know how to review, correct, and iterate. You already know that no resource, human or otherwise, delivers perfect output without oversight.

The people most certain that AI accountability is unsolvable are often the ones with the least experience managing accountability in any form. They judge the situation from the outside, apply logic that sounds right, and skip the part where you actually test it against reality.

That is the pattern. Strong opinions, zero reps.

Real Accountability Was Always on the Manager

When an employee makes a mistake, it is the manager’s responsibility. When AI makes a mistake, it is the user’s responsibility. The tool changed. The ownership did not.

Anyone who has run a team knows this. You hire someone. You give them context. You review their work. If you skip the review and something breaks, that is on you.

AI works the same way. You prompt it. You review the output. You ship it or you do not. If you ship garbage, that is on you, not the tool.

The people who understand delegation are thriving with AI. The people who never learned to manage output are struggling. That gap existed before AI. AI just made it obvious.

“Pro-AI” Is Not a Position

Several people called the article “pro-AI.” As if supporting a technology is an ideology.

I am not pro-AI. I am pro-progress. And I do not have any problem with Amish people. If you like that lifestyle, go live off the grid. Being against AI is like being against electricity because the power sometimes goes out, or because it can shock and kill. You can hold that position. It is up to you.

“You Used AI to Write This”

Of course I use AI to polish my text. That is what tools are for.

Every person who left a comment criticizing AI did it on a computer, using the internet, on a platform run by algorithms. If using AI disqualifies the argument, handwrite your comment and send it by pigeon.

The Acceleration Problem Is Real

AI accelerates everything, including bad decisions. That is true.

If your process is broken, AI will break it faster. If your QA is weak, AI will ship more bugs faster. If your judgment is poor, AI will execute poor judgment at scale.

This is not an argument against AI. It is an argument for better management.

Speed without direction has always been dangerous. A fast car with a bad driver is more dangerous than a slow one. But I personally do not plan to go back to horses.

The answer is better drivers. Better process. Better review. Not slower tools.

Yes, AI Is Dangerous

I am not going to pretend it is not. AI is powerful and power is always dangerous. It can be misused. It can cause damage at scale. It can make bad situations worse faster than anything before it.

But so could every major technology that came before. Nuclear energy. The internet. Cars. Firearms. Medicine. All of them dangerous. All of them still here. Because the value was greater than the risk, and we learned to manage them.

The real question is whether we can handle it. And if we cannot, then maybe we do not deserve it. Every generation faced a technology that could end things if used wrong. That is not new. What is new is the speed. But the answer was never to stop. It was to grow up faster.

What Actually Needs to Change

Nobody knows exactly what AI is. Nobody knows what it will become. Not the people building it, not the people using it, and definitely not the people arguing about it in LinkedIn comments. We are all figuring this out in real time.

The question is not “can AI be held accountable?” No tool can be held accountable. A spreadsheet cannot be held accountable for wrong numbers. A CRM cannot be held accountable for lost deals.

The question is: who owns the output?

If the answer is clear, AI is just another resource. If the answer is unclear, you had a problem before AI showed up.

The confidence in those comment sections is not expertise. It is comfort. And comfort has never been a good guide for understanding anything new.